How to remove referral spam in Google Analytics with a single segment

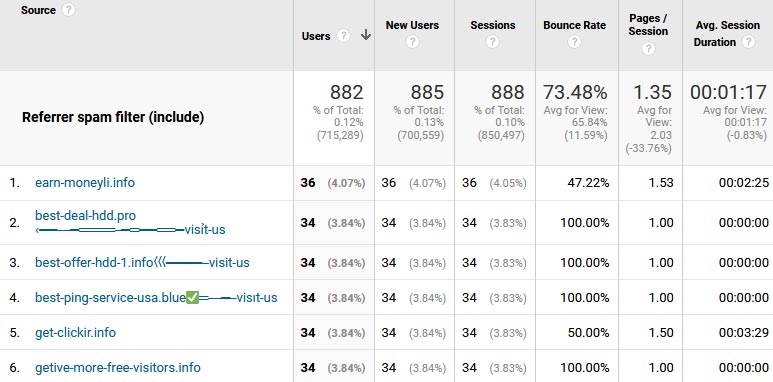

Probably everyone with a website, and certainly anybody who works with Google Analytics, has seen it in their reports: referral spam. These are visits to your website from typical domains like the ones below.

These visits usually (but not always) have a bounce rate of 100%, 1 page per session and a session duration of 0 seconds, and they pollute the data in your reports. In case you’re curious about the 48 domain names I’ve identified so far, here’s the list in a Google Sheet.

What is referral spam?

The only purpose served by referral spam is to attract visitors to these domains and, once there, convince them to make use of their services. It is really just an annoying form of online marketing.

I have a hunch that the same method is applied to their customers to create the illusion of an increase in traffic. However, as I have no data to prove that, I’ll just stop that train of thought right here.

What are the consequences of referral spam?

In terms of real-world impact, the consequences would be fairly limited if it weren’t for the large numbers of fake visitors skewing the data and making it less and less useful.

All aggregate metrics you might find of interest are affected, for example bounce rate, pages / session and session duration. This could very well lead to poor decision making, as the data might be telling you a story that is quite different from reality.
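To make the skew concrete, here is a small worked example in Python. The session counts are made up for illustration and are not taken from any real report; the sketch only shows how a batch of sessions that all bounce shifts an aggregate metric.

```python
def blended_bounce_rate(real_sessions, real_bounces, spam_sessions):
    """Bounce rate as reported when spam sessions (all bounces) are mixed in."""
    total_sessions = real_sessions + spam_sessions
    total_bounces = real_bounces + spam_sessions  # every spam session bounces
    return total_bounces / total_sessions


# Hypothetical numbers: 1,000 real sessions, 400 of which bounced,
# plus 300 spam sessions.
real_rate = 400 / 1000
reported_rate = blended_bounce_rate(1000, 400, 300)
print(f"real: {real_rate:.1%}, reported: {reported_rate:.1%}")
```

With these assumed numbers, a real bounce rate of 40% shows up in the report as roughly 54%.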

How to identify referral spam in your reports

Cleaning up the data is actually quite straightforward: you can use filters, segments, you name it. But it’s a bit cumbersome to have to do this every time a new spam domain pops up. Hence this article.

In short, what I noticed is that there are specific characteristics that most of the spammy domains have in common, which makes it relatively easy to exclude them from reports.

Characteristic 1. They’re not real users

In the end, the data in the reports is created by scripts and not by actual users. As a consequence, certain user-level variables are either left blank or populated incorrectly.

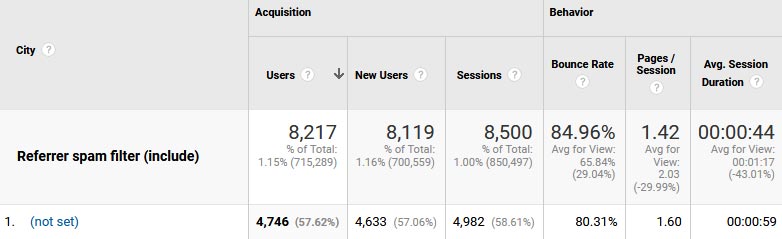

You can see this, for example, in the (not set) value for City and in some cases a malformed value in the screen resolution variable (for example 1920×1080&vp=1920×1080).

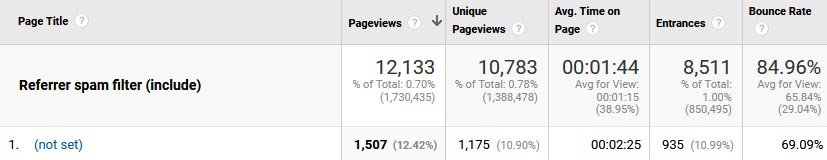

Characteristic 2. They’re not accessing actual content

As mentioned, it’s scripts that trigger the data creation through the Google Analytics script on a website, not the page loading itself. This again results in variables being left blank, in this case a (not set) value for the Page title variable.

This assumes that your website does in fact have a page title for every page. If not, that’s a different problem that should be solved first.

Note: this variable might also be affected by an implementation of custom page view events. Be sure to check.

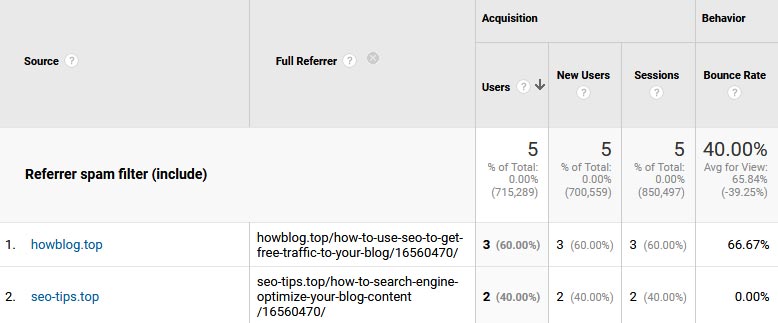

Characteristic 3. They’re data-driven marketeers

And data-driven marketeers like to quantify results of their campaigns. So when looking at the Full Referrer, you’ll soon notice a numerical value like 16560470 in the screenshot below.

Basically, (I’m assuming) this is your website’s own spam ID. Whenever you follow this URL, the spammy marketeer will be able to attribute the visit to their campaigns.

These three points give you all the info needed to filter out most of the referral spam. Please note that you’ll unfortunately catch a handful of non-spam visits as well. Hopefully a handful is not what your business depends on.
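The three characteristics can be sketched as a small check in Python. This is not the segment configuration itself, only an illustration of the logic. The dictionary keys, the example referrer domain and the minimum ID length of five digits are assumptions made for this sketch; adjust them to whatever your own export looks like.

```python
import re

# A run of 5+ digits in the full referrer, like the 16560470 example.
# The minimum length is an assumption; tune it to your own reports.
SPAM_ID = re.compile(r"\d{5,}")

# A well-formed screen resolution looks like "1920x1080" ("x" or "×").
VALID_RESOLUTION = re.compile(r"^\d+[x×]\d+$")


def spam_signals(session):
    """Return the names of the spam characteristics a session row matches."""
    signals = []
    resolution = session.get("screen_resolution")
    bad_resolution = resolution is not None and not VALID_RESOLUTION.match(resolution)
    if session.get("city") == "(not set)" or bad_resolution:
        signals.append("not a real user")
    if session.get("page_title") == "(not set)":
        signals.append("no actual content")
    if SPAM_ID.search(session.get("full_referrer", "")):
        signals.append("numeric ID in referrer")
    return signals


# Hypothetical row, shaped like an exported report line.
row = {
    "city": "(not set)",
    "screen_resolution": "1920x1080&vp=1920x1080",
    "page_title": "(not set)",
    "full_referrer": "spam-domain.example/16560470",
}
print(spam_signals(row))
```

The function returns the matched signals rather than a yes/no verdict, so you can decide for yourself how many characteristics a session must match before you treat it as spam.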

Remove referral spam from Google Analytics with segments

Rather than forcing you to create the segments yourself, here is the configuration of mine, which you can simply open and apply to your own analytics account:

- This (<- click here) is the segment configuration for inclusion of referral spam

- This (<- click here) is the segment configuration for exclusion of referral spam

Note: be sure to adjust the numerical values by checking the Full Referrer values in your own reports.

Did you know that you can also clean up Direct traffic spam?

Another type of traffic that gets inflated quite a bit is Direct traffic to your site. Here we’re not talking spam to attract traffic, but mostly automated scripts (bots) crawling your content.

Remove referral spam from Google Analytics with filters

What I noticed in my Google Analytics account are two network domains with a traffic pattern that could really only be automated. Direct traffic to single pages only, all from the same network domain. In principle the network domain variable should contain the domain names of internet providers.

Two domains that definitely aren’t internet providers are Relativity.com and Amazonaws.com. They are already included in the segment configuration above.
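If you prefer to maintain your own list of such network domains, one option is to combine them into a single regular expression for a "matches regex" condition. The helper below is a minimal sketch of that idea; the function name is hypothetical, and the lowercase spelling of the domains is an assumption about how they appear in your reports.

```python
import re

# The two network domains named above; extend with your own findings.
BOT_NETWORK_DOMAINS = ["relativity.com", "amazonaws.com"]


def build_filter_pattern(domains):
    """Join domains into one regex, escaping dots so they match literally."""
    return "|".join(re.escape(d.lower()) for d in domains)


pattern = build_filter_pattern(BOT_NETWORK_DOMAINS)
print(pattern)
```

Escaping matters here: an unescaped dot matches any character, so the pattern could catch more network domains than intended.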

Next to that, you can also turn on a filter for bots in the Admin area of your account. Go to Admin > View > Bot Filtering and select Exclude all hits from known bots and spiders (here’s the Google support page).

Block specific IP addresses

Two IP addresses that I’ve blocked via my .htaccess file, rather than via the robots.txt or in Google Analytics, are 23.101.169.3 and 23.100.232.233.

These two IP addresses drove massive amounts of traffic to my sites, all coming from Chicago. Some further digging showed that they belong to Microsoft Azure. I’m not sure who is running what scripts from there, but they’re worth blocking as it seems like simply another bot.
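As a rough sketch of what such a block could look like, the Python snippet below renders the rules for an .htaccess file. It assumes Apache 2.4 with mod_authz_core (older Apache versions use a different syntax), and the function name is hypothetical. Check the output against your own server setup before using it.

```python
import ipaddress

# The two addresses mentioned above.
BLOCKED_IPS = ["23.101.169.3", "23.100.232.233"]


def htaccess_block(ips):
    """Render an Apache 2.4 (mod_authz_core) block denying the given IPs."""
    lines = ["<RequireAll>", "    Require all granted"]
    for ip in ips:
        ipaddress.ip_address(ip)  # raises ValueError on a malformed address
        lines.append(f"    Require not ip {ip}")
    lines.append("</RequireAll>")
    return "\n".join(lines)


print(htaccess_block(BLOCKED_IPS))
```

Validating each address first means a typo in the list fails loudly in the script instead of ending up as a broken rule on the server.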

How about the robots.txt file then?

The thing with the robots.txt file is that it is a perfect way to tell official bots what part of your content can and cannot be indexed. When it comes to non-official bots, these can simply ignore the file. So while it’s useful to configure it correctly for the crawl bots of search engines like Google, Bing and Baidu, it won’t prevent crawling by others.
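The sketch below shows why that is, using Python’s standard `urllib.robotparser` module. The robots.txt content and the URLs are hypothetical. The point is that the check happens on the crawler’s side: a well-behaved bot asks the question before requesting a page, and a bot that never asks is not stopped by anything in the file.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: every crawler is asked to stay out of /private/.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def polite_fetch_allowed(user_agent, url):
    """What a well-behaved crawler asks itself before requesting a URL."""
    return parser.can_fetch(user_agent, url)


print(polite_fetch_allowed("Googlebot", "https://example.com/private/report"))
print(polite_fetch_allowed("Googlebot", "https://example.com/blog/"))
```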

Ok that’s all I’ve got for now. Hope you found it insightful, let me know if you have any additional tips to make sure Google Analytics data stays as clean as possible.

So where is the howto, i dont see a working howto actually?

you only state ‘this is the segment for…” it shows nothing?

Hi Rombout, thanks for your comment. There is a link behind ‘this’ that gives you the segment configuration straight in your GA account. Let me know if that works for you. Thijs